In previous posts, we’ve seen how to install ansible, set up inventory, create a basic playbook and use templates. Today we’ll look at roles.

Ansible roles are a way to reuse ansible code across playbooks, which makes this a slightly more advanced topic. A role is quite similar to a function in programming, or perhaps a library: a way to avoid repeating yourself or copy/pasting code, which is almost never a good idea. You define a role in its own directory, with its tasks in a YAML file. We’ll use two playbooks to show how the same role can be reused in each one.

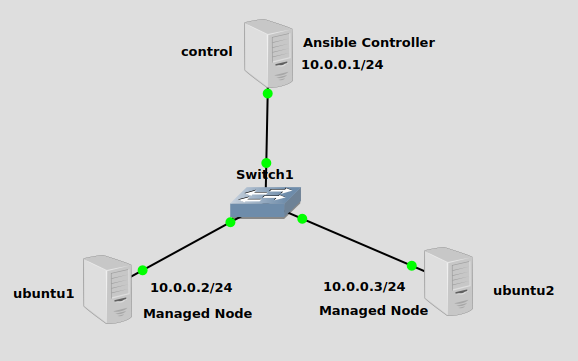

Topology

In the spirit of reusing stuff, I’m reusing the same topology from a previous post. We have a single subnet of 10.0.0.0/24, with the ansible controller sitting at 10.0.0.1 and two managed nodes at 10.0.0.2 and 10.0.0.3.

Inventory

If you’re unsure how to create inventory, check my earlier post on doing that (link at the top). You don’t have to put ansible files in any particular place, as long as you specify the inventory file and playbook on the command line.

I have created a hosts file in the home directory on the ansible controller. I’ve already set up SSH key authentication, so no need for the user password. The hosts file looks like this:

    servers:
      hosts:
        ubuntu1:
          ansible_host: 10.0.0.2
          ansible_user: james
        ubuntu2:
          ansible_host: 10.0.0.3
          ansible_user: james
      vars:
        ansible_python_interpreter: /usr/bin/python3

Create a role

To create a role, we need to follow some rules that ansible has laid out for the directory and naming structure. First create a roles directory in the main ansible directory. Inside that, create another folder that can be named anything. The name of this second folder will be the name of the role itself. I’ll name mine rolesarecool.

    mkdir -p roles/rolesarecool/tasks
    touch roles/rolesarecool/tasks/main.yml

Inside rolesarecool, create yet another directory called tasks, and inside tasks create main.yml. We’ll put our task(s) in this YAML file. This role is just for demonstration purposes, so it contains a single task that creates a text file in the home directory whose name ends in _rolesarecool.txt.

    - name: Create rolesarecool.txt
      copy:
        dest: ./{{ ansible_play_name }}_rolesarecool.txt
        content: |
          {{ ansible_play_name }} created this. Roles are cool, right?
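If you’d rather set up the role in one go from the shell, here’s a minimal sketch that creates the directory and writes the task above into main.yml with a heredoc. It assumes you run it from the main ansible directory (the home directory, in my case) in a POSIX shell.

```shell
# Run from the main ansible directory (where the hosts file lives).

# Create roles/<role_name>/tasks; -p makes this safe to re-run.
mkdir -p roles/rolesarecool/tasks

# Write the role's task list. Quoting 'EOF' disables all shell expansion
# inside the heredoc, so the YAML is written exactly as typed.
cat > roles/rolesarecool/tasks/main.yml <<'EOF'
- name: Create rolesarecool.txt
  copy:
    dest: ./{{ ansible_play_name }}_rolesarecool.txt
    content: |
      {{ ansible_play_name }} created this. Roles are cool, right?
EOF
```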

You need to follow the directory structure roles/<your_role_name>/tasks/main.yml for this to work, because that is where ansible looks for a role’s tasks.
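A quick way to double-check that layout before running a playbook is a tiny shell helper like the one below. check_role is a hypothetical name of my own, not an ansible command; it simply tests that tasks/main.yml exists inside the role directory you give it.

```shell
# check_role: hypothetical helper, not part of ansible.
# Usage: check_role roles/<role_name>
check_role() {
  role_dir="$1"
  if [ -f "$role_dir/tasks/main.yml" ]; then
    echo "ok: $role_dir/tasks/main.yml found"
  else
    # Report the missing file on stderr and signal failure to the caller.
    echo "missing: $role_dir/tasks/main.yml" >&2
    return 1
  fi
}
```

For example, running check_role roles/rolesarecool from the main ansible directory should print the ok line.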

You might have noticed the task is not part of a larger play, i.e. there’s no hosts or gather_facts or tasks list. You just list out your tasks in your role, and they’ll be incorporated into the playbook/play that is using the role (you can have multiple plays in a playbook, but many have just one play).

In addition, I’ve used the built-in ansible variable {{ ansible_play_name }}, whose value comes from the play that calls this role: specifically, from the name field at the top of that play, which we’ll see in the next section. This way the role creates a uniquely named text file, with its own contents, for each play that calls it.
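To make the naming concrete: ansible fills in {{ ansible_play_name }} when the task runs, so the dest path becomes the play’s name followed by _rolesarecool.txt. The sketch below only mimics that substitution in shell for the two play names used in this post; role_file_for is a hypothetical helper, and ansible does the real templating with Jinja2.

```shell
# role_file_for: hypothetical helper that mimics how the role's dest path
# "./{{ ansible_play_name }}_rolesarecool.txt" renders for a given play name.
role_file_for() {
  play_name="$1"
  printf './%s_rolesarecool.txt\n' "$play_name"
}

# Print the file name each play would produce.
for play in super_duper_play_A super_duper_play_B; do
  role_file_for "$play"
done
```

Running it prints ./super_duper_play_A_rolesarecool.txt and ./super_duper_play_B_rolesarecool.txt, one per line.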

Create two different playbooks

Now we’ll create two playbooks, each writing its own text file and also pulling in the rolesarecool role we just wrote.

Back in the main ansible directory (where the hosts file is; in my case that’s the user’s home directory), we’ll write the first playbook in playbook-a.yml. Mine looks like this:

    ---
    - hosts: all
      name: super_duper_play_A
      gather_facts: false
      tasks:
        - ansible.builtin.include_role:
            name: rolesarecool

        - name: Create playbook-a.txt
          copy:
            dest: ./playbook-a.txt
            content: |
              playbook-a created this text file!

Notice the name field (super_duper_play_A), which will be used in the text file name when the role rolesarecool runs.

Then we’ll create the second playbook, playbook-b.yml. Mine looks like this:

    ---
    - hosts: all
      name: super_duper_play_B
      gather_facts: false
      tasks:
        - ansible.builtin.include_role:
            name: rolesarecool

        - name: Create playbook-b.txt
          copy:
            dest: ./playbook-b.txt
            content: |
              playbook-b created this text file!

It’s the same as the first playbook, except I substituted “B” for “A” in a few places. After running these two playbooks, a total of 4 text files should be created on each of the managed Ubuntu nodes:

    playbook-a.txt
    playbook-b.txt
    super_duper_play_A_rolesarecool.txt
    super_duper_play_B_rolesarecool.txt

The first two are created by each playbook’s own task; the last two are created by the role, once for each playbook that includes it.
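Since the two playbooks differ only in one letter, you could also generate both from the shell instead of copy/pasting. This is a minimal sketch, assuming a POSIX shell with tr available; it writes playbook-a.yml and playbook-b.yml with the same content as shown above.

```shell
# Write playbook-a.yml and playbook-b.yml, which differ only in one letter.
for letter in a b; do
  # Upper-case copy of the letter for the play name (A or B).
  upper=$(printf '%s' "$letter" | tr '[:lower:]' '[:upper:]')

  # Unquoted EOF so $letter and $upper expand; the YAML has no other $ signs.
  cat > "playbook-$letter.yml" <<EOF
---
- hosts: all
  name: super_duper_play_$upper
  gather_facts: false
  tasks:
    - ansible.builtin.include_role:
        name: rolesarecool

    - name: Create playbook-$letter.txt
      copy:
        dest: ./playbook-$letter.txt
        content: |
          playbook-$letter created this text file!
EOF
done
```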

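Last thing: there are several different ways to pull role code into a playbook, such as the play-level roles keyword, import_role, and include_role, which is what we’re using here. Each method has its own nuances (for example, import_role is processed statically when the playbook is parsed, while include_role is processed dynamically when that task is reached), so be sure to check the official ansible documentation for further detail.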
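For comparison, here’s a sketch of a third playbook that pulls in the same role with the play-level roles keyword instead of include_role, written out with a heredoc like the other shell examples. The file name playbook-c.yml and the play name super_duper_play_C are hypothetical. One nuance to keep in mind: roles listed under roles: run before the play’s tasks, whereas include_role runs wherever it appears in the task list.

```shell
# Hypothetical third playbook that pulls in the role with the play-level
# "roles:" keyword instead of ansible.builtin.include_role.
cat > playbook-c.yml <<'EOF'
---
- hosts: all
  name: super_duper_play_C
  gather_facts: false
  roles:
    - rolesarecool
  tasks:
    - name: Create playbook-c.txt
      copy:
        dest: ./playbook-c.txt
        content: |
          playbook-c created this text file!
EOF
```

Running it with ansible-playbook -i hosts playbook-c.yml should add super_duper_play_C_rolesarecool.txt and playbook-c.txt on each node.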

Run the playbooks

Here we go, let’s run both playbook-a.yml and playbook-b.yml:

    james@james:~$ ansible-playbook -i hosts playbook-a.yml

    PLAY [super_duper_play_A] ******************************************************

    TASK [ansible.builtin.include_role : rolesarecool] *****************************

    TASK [rolesarecool : Create rolesarecool.txt] **********************************
    changed: [ubuntu2]
    changed: [ubuntu1]

    TASK [Create playbook-a.txt] ***************************************************
    ok: [ubuntu1]
    ok: [ubuntu2]

    PLAY RECAP *********************************************************************
    ubuntu1 : ok=2 changed=1 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0
    ubuntu2 : ok=2 changed=1 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

    ansible-playbook -i hosts playbook-b.yml

    PLAY [super_duper_play_B] ******************************************************

    TASK [ansible.builtin.include_role : rolesarecool] *****************************

    TASK [rolesarecool : Create rolesarecool.txt] **********************************
    changed: [ubuntu1]
    changed: [ubuntu2]

    TASK [Create playbook-b.txt] ***************************************************
    ok: [ubuntu2]
    ok: [ubuntu1]

    PLAY RECAP *********************************************************************
    ubuntu1 : ok=2 changed=1 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0
    ubuntu2 : ok=2 changed=1 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

Looks like everything went through ok!
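If you’d rather run both playbooks in one go, a small loop works too. This sketch (run_playbooks is a hypothetical helper name) first runs ansible-playbook with --syntax-check, which checks the playbook’s syntax without executing it, and only then does the real run, stopping at the first failure. It assumes you call it from the main ansible directory, next to the hosts file.

```shell
# run_playbooks: hypothetical helper; run it from the main ansible directory.
# Usage: run_playbooks playbook-a.yml playbook-b.yml
run_playbooks() {
  for pb in "$@"; do
    # Check the playbook's syntax without executing it.
    ansible-playbook -i hosts --syntax-check "$pb" || return 1
    # Real run against the inventory in ./hosts.
    ansible-playbook -i hosts "$pb" || return 1
  done
}
```

For example: run_playbooks playbook-a.yml playbook-b.yml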

Verification

Let’s make sure the playbooks and role did what we expected. On the first managed node, let’s check the home directory:

    ls -l

    total 16
    -rw-rw-r-- 1 james james 56 Nov 9 03:30 playbook-a.txt
    -rw-rw-r-- 1 james james 35 Nov 9 03:16 playbook-b.txt
    -rw-rw-r-- 1 james james 56 Nov 9 03:33 super_duper_play_A_rolesarecool.txt
    -rw-rw-r-- 1 james james 56 Nov 9 03:33 super_duper_play_B_rolesarecool.txt

Let’s check their contents:

    cat *.txt

    playbook-a created this text file in its own task list!
    playbook-b created this text file!
    super_duper_play_A created this. Roles are cool, right?
    super_duper_play_B created this. Roles are cool, right?

Looks good! Checking the second managed node should produce similar results.
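With more nodes, eyeballing ls output gets old. Here’s a minimal sketch of a check you could run on a managed node; check_outputs is a hypothetical helper that defaults to the home directory (where the files landed in my case), reports any of the four expected files that are missing, and prints the contents of the rest.

```shell
# check_outputs: hypothetical helper to run on a managed node.
# Usage: check_outputs [directory]   (defaults to $HOME)
check_outputs() {
  dir="${1:-$HOME}"
  status=0
  for f in playbook-a.txt playbook-b.txt \
           super_duper_play_A_rolesarecool.txt \
           super_duper_play_B_rolesarecool.txt; do
    if [ -f "$dir/$f" ]; then
      # Print the file name followed by its contents on one line.
      printf '%s: %s\n' "$f" "$(cat "$dir/$f")"
    else
      echo "missing: $dir/$f" >&2
      status=1
    fi
  done
  # Non-zero if any expected file was missing.
  return "$status"
}
```

To check both nodes from the controller without logging in, an ad-hoc command such as ansible servers -i hosts -m ansible.builtin.shell -a 'ls -l *.txt' should also work; the shell module, rather than the default command module, is needed for the * glob to expand.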

Happy automating!